Using a four-lane interface between the server NIC and ToR switch instead of a single-lane interface translates into four times more SerDes channels consumed in switch and NIC silicon, four times more interface real estate on NIC and switch hardware, and four times more copper wiring in the cables that connect them. A 40GbE link uses four 10-Gb/s physical lanes to communicate between link partners. The value of 25GbE technology is clear in comparison to the existing 40GbE standard, which IEEE 802.3 has defined as the next higher link speed after 10GbE (see the figure).

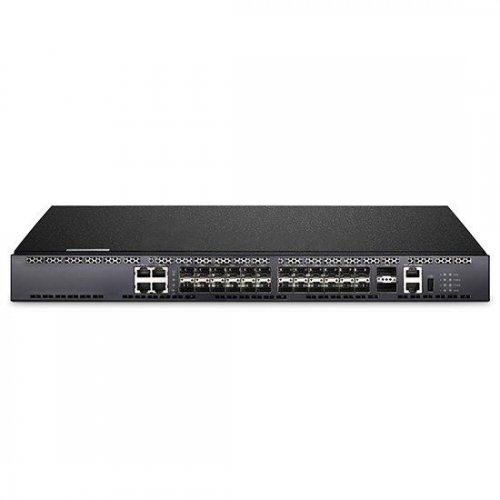

Employing 25GbE and 50GbE as ToR switch downlinks also mates seamlessly with the deployment of 100GbE ToR uplinks, because it can leverage the same 25-Gb/s per-lane SerDes technology in switch silicon and use the same front panel connector type. The Consortium’s 25GbE spec uses just one lane and the 50GbE spec uses two lanes, delivering a more optimal solution for next-generation connectivity between server and storage endpoints and the ToR switch. The latest 100GbE connection standards use four physical serializer/deserializer (SerDes) lanes to enable communication between link partners, with each of the four lanes running at 25 Gb/s. The 25/50GbE specification fully leverages existing IEEE-defined physical and link layer characteristics at 10GbE, 40GbE, and 100GbE. By opening up the 25/50GbE specification royalty-free to any vendor/consumer that joins, the Consortium provides the industry faster access to data-center interconnect innovation based on multi-sourced, interoperable technologies. The 25/50GbE standard provides more options for optimizing network interconnect architecture on top of already established IEEE 10/40/100GbE standards. The 25 Gigabit Ethernet Consortium was established to promote industry adoption of newly developed 25GbE and 50GbE specifications for faster and more cost-efficient connectivity between a data center’s server network interface controller (NIC) and top-of-rack (ToR) switch. This file type includes high resolution graphics and schematics when applicable. This challenge has led to the development of new Ethernet technologies, optimized to fine-tune network capacity to meet bandwidth demands of today and tomorrow. The traffic created by increasing numbers of tenants, applications, and services in data centers pushes the limits of standard 10 Gigabit Ethernet (10GbE) links. 
The rising scale of cloud computing, Big Data, and mega data centers has network administrators continually scrambling to recalibrate capacity requirements and balance those requirements with budget limitations.
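
The lane counts quoted in this article can be tabulated in a few lines of Python to make the comparison concrete. This is only an illustrative sketch: the lane counts and nominal per-lane rates come from the text, while the `LinkType` class, the `LINKS` table, and the `lane_ratio` helper are hypothetical names introduced here, not part of any specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkType:
    """One Ethernet link speed, described by its SerDes lane layout."""
    name: str
    lanes: int            # number of physical SerDes lanes
    lane_rate_gbps: int   # nominal rate per lane, in Gb/s

    @property
    def link_rate_gbps(self) -> int:
        # Nominal link rate is simply lanes x per-lane rate.
        return self.lanes * self.lane_rate_gbps


# Lane layouts as described in the article (nominal rates only).
LINKS = {
    "25GbE": LinkType("25GbE", lanes=1, lane_rate_gbps=25),
    "40GbE": LinkType("40GbE", lanes=4, lane_rate_gbps=10),
    "50GbE": LinkType("50GbE", lanes=2, lane_rate_gbps=25),
    "100GbE": LinkType("100GbE", lanes=4, lane_rate_gbps=25),
}


def lane_ratio(a: str, b: str) -> float:
    """How many times more SerDes lanes link type `a` uses than `b`."""
    return LINKS[a].lanes / LINKS[b].lanes


if __name__ == "__main__":
    for link in LINKS.values():
        print(f"{link.name}: {link.lanes} lane(s) x {link.lane_rate_gbps} Gb/s "
              f"= {link.link_rate_gbps} Gb/s")
    print("40GbE vs 25GbE lane ratio:", lane_ratio("40GbE", "25GbE"))
```

In this sketch a 40GbE port consumes four lanes to deliver 40 Gb/s, while a single 25-Gb/s lane delivers 25 Gb/s, which is the per-lane efficiency argument the article makes for 25GbE downlinks.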
